At the intersection of artificial intelligence and human expression, a new frontier is emerging: neural choreography, in which silent sparks of computation become fluid, intentional movement. This deep dive explores how machine learning algorithms decode the intricate language of dance, rhythm, and spatial relationships.

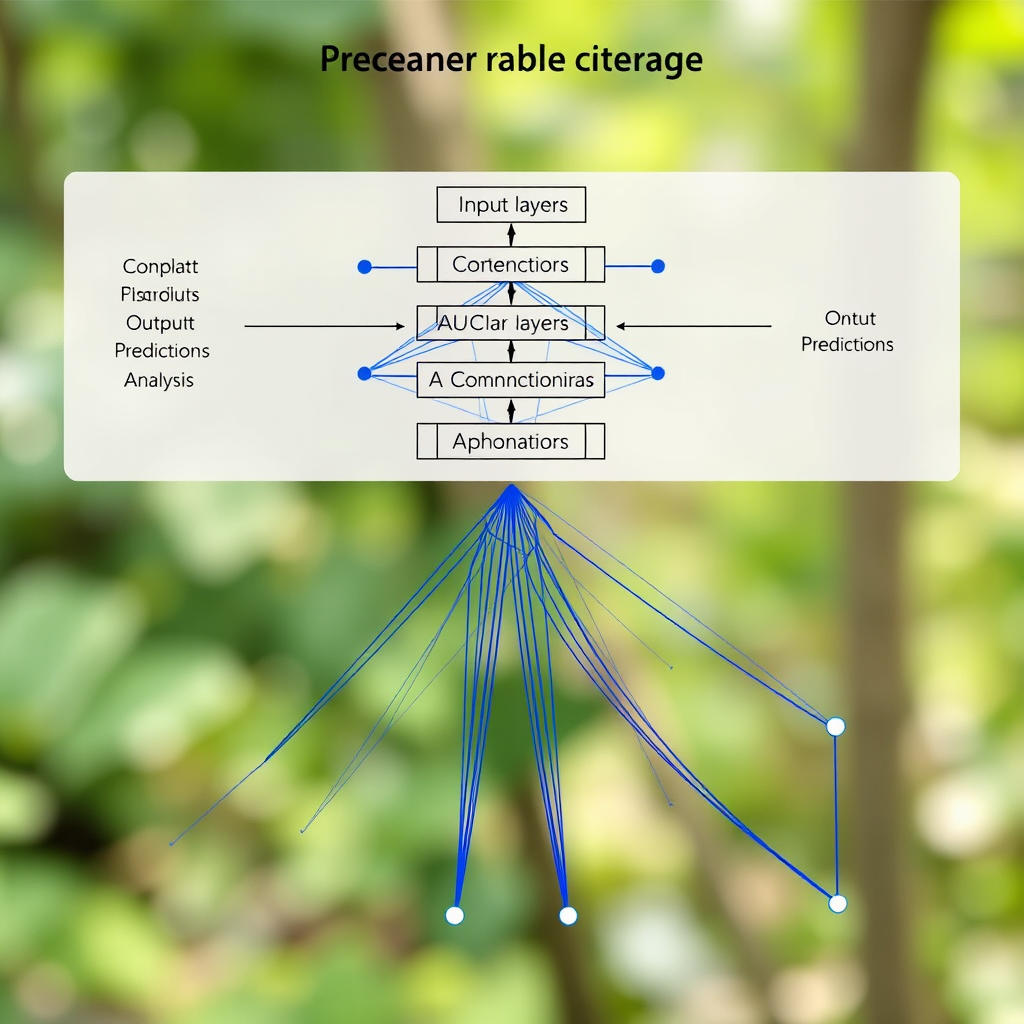

The Architecture of Movement Intelligence

Modern AI systems approach choreographic understanding through sophisticated neural architectures that mirror the complexity of human motor cognition. These networks process movement data through multiple layers of abstraction, beginning with raw positional coordinates and evolving into high-level semantic understanding of artistic intent.

The foundation lies in temporal convolutional networks that capture the rhythmic essence of movement. Unlike traditional computer vision models that analyze static frames, these systems understand motion as a continuous flow of intentional gestures, each carrying emotional and artistic weight.
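To make the idea of convolving over time concrete, here is a minimal sketch in plain Python. It assumes a pose sequence stored as a list of frames, each a flat list of joint coordinates. The function name `temporal_conv`, the kernel, and the toy data are illustrative assumptions rather than part of any particular system, and a real network would learn many such kernels instead of using a hand-picked one.

```python
def temporal_conv(frames, kernel, dilation=1):
    """Apply a causal 1D convolution along the time axis of a pose sequence.

    frames:  list of T frames, each a list of D coordinates
    kernel:  list of K weights; kernel[0] applies to the current frame,
             kernel[k] to the frame k * dilation steps in the past
    Returns a list of T frames; missing past frames are treated as zeros.
    """
    T = len(frames)
    D = len(frames[0])
    out = []
    for t in range(T):
        acc = [0.0] * D
        for k, w in enumerate(kernel):
            src = t - k * dilation
            if src < 0:
                continue  # causal zero padding: nothing before the first frame
            for d in range(D):
                acc[d] += w * frames[src][d]
        out.append(acc)
    return out


# Toy sequence (hypothetical data): one joint moving along x at constant speed.
sequence = [[float(t), 0.0] for t in range(6)]

# A difference kernel turns positions into per-frame velocity.
velocity = temporal_conv(sequence, kernel=[1.0, -1.0])
```

With this kernel every frame after the first reports the same displacement, which is the kind of motion feature a static, frame-by-frame model cannot see. Raising `dilation` lets the same two-weight kernel compare frames that are further apart in time.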

Dataset Curation: Teaching Machines to See Movement

The quality of movement understanding directly correlates with the richness of training data. Contemporary AI choreography systems learn from vast repositories of motion capture data, video sequences, and annotated performance recordings. Each dataset entry contains not just positional information, but contextual metadata about style, emotion, and artistic intention.

Advanced preprocessing techniques extract meaningful features from raw movement data. Pose estimation algorithms identify key anatomical landmarks, and temporal smoothing filters reduce noise while preserving the essential character of each gesture. The result is a clean, structured representation of human movement that neural networks can effectively process.
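As an illustration of temporal smoothing, the sketch below applies a centered moving average to a single coordinate track. This is only one simple choice of filter, and the name `smooth_track` and the sample values are assumptions made for the example.

```python
def smooth_track(values, window=3):
    """Centered moving average over one coordinate track.

    The window shrinks near the ends so the output has the same length
    as the input. `window` must be an odd positive integer.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be an odd positive integer")
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed


# Hypothetical noisy x-coordinate of a wrist landmark across 7 frames.
wrist_x = [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0]
smoothed = smooth_track(wrist_x, window=3)
```

The window size is the trade-off to watch: a wider window removes more jitter, but it also blurs sharp accents that may be part of the gesture's character.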

Rhythm Recognition and Temporal Understanding

Perhaps the most challenging aspect of neural choreography lies in understanding rhythm and timing. AI systems must learn to recognize not just what movements occur, but when they occur and how they relate to underlying musical structures. Attention mechanisms are one way to do this: they assign higher weight to the time steps that matter for the current beat or phrase and lower weight to incidental variation.
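A rough sketch of how attention weights time steps is shown below, using scaled dot-product attention for a single query. Treating the query as a music feature and the keys as motion features is an assumption made for illustration, as are the function name `attend` and the toy vectors.

```python
import math


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector.

    query:  list of d floats
    keys:   list of T key vectors, each of length d
    values: list of T value vectors (any common length)
    Returns (context, weights), where weights sum to 1 over the T steps.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    # Subtract the max score before exponentiating for numerical stability.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(values[0])
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return context, weights


# Hypothetical 2-D features for four time steps; step 2 matches the query best.
keys = [[0.1, 0.0], [0.0, 0.2], [3.0, 3.0], [0.2, 0.1]]
values = [[1.0], [2.0], [3.0], [4.0]]
context, weights = attend([1.0, 1.0], keys, values)
```

Here the third time step receives most of the weight because its key aligns most closely with the query, so the returned context is dominated by that step's value. The other steps are down-weighted rather than discarded.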

Recurrent neural networks with long short-term memory (LSTM) cells excel at capturing these temporal dependencies. They learn to anticipate movement sequences, understanding that certain gestures naturally flow into others based on physical constraints and artistic conventions. This temporal awareness enables AI systems to generate coherent, flowing choreographic sequences rather than disconnected individual poses.
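The gating that lets an LSTM carry information across time can be sketched with a single-unit cell. The weights below are hand-picked and untrained, and the names `lstm_step` and `params` are assumptions for this example. A practical model would use vector-valued states and learned weight matrices.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, params):
    """One step of a single-unit LSTM cell with scalar input and state.

    params maps each gate name ("i", "f", "o", "g") to a tuple
    (w_x, w_h, b): input weight, recurrent weight, and bias.
    """
    def pre(gate):
        w_x, w_h, b = params[gate]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre("i"))      # input gate: how much new content to write
    f = sigmoid(pre("f"))      # forget gate: how much old memory to keep
    o = sigmoid(pre("o"))      # output gate: how much memory to expose
    g = math.tanh(pre("g"))    # candidate content
    c = f * c_prev + i * g     # updated cell state
    h = o * math.tanh(c)       # updated hidden state
    return h, c


# Hand-picked, untrained weights (purely illustrative).
params = {"i": (1.0, 0.0, 0.0), "f": (0.0, 0.0, 2.0),
          "o": (0.0, 0.0, 2.0), "g": (1.0, 0.0, 0.0)}

h, c = 0.0, 0.0
states = []
for x in [1.0, 0.0, 0.0, 0.0]:   # a single "gesture" followed by stillness
    h, c = lstm_step(x, h, c, params)
    states.append(c)
```

After the initial input, the cell state fades gradually instead of resetting, because the forget gate keeps most of the previous memory at each step. That retained trace is what allows a later prediction to depend on a gesture from several frames earlier.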

Spatial Relationship Modeling

Dance exists in three-dimensional space, and AI systems must understand how bodies move through and interact with their environment. Graph neural networks prove particularly effective for modeling these spatial relationships, treating body parts as nodes in a dynamic graph where edges represent physical connections and spatial proximities.
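The graph view of a body can be sketched in a few lines. The example below assumes a hypothetical five-joint skeleton and shows a single round of neighbour averaging, the aggregation step at the core of many graph neural network layers. It models only the physical connections; proximity-based edges and learned weights are left out.

```python
# Hypothetical five-joint skeleton; real pose formats define many more joints.
JOINTS = ["head", "torso", "left_hand", "right_hand", "pelvis"]
BONES = [("head", "torso"), ("torso", "left_hand"),
         ("torso", "right_hand"), ("torso", "pelvis")]


def build_adjacency(joints, bones):
    """Return an undirected adjacency map with a self-loop on every joint."""
    adj = {j: {j} for j in joints}
    for a, b in bones:
        adj[a].add(b)
        adj[b].add(a)
    return adj


def aggregate(features, adj):
    """One round of mean aggregation: each joint averages the feature
    vectors of itself and its direct neighbours. A learned layer would
    follow this with a weight matrix and a nonlinearity, omitted here."""
    out = {}
    for joint, neighbours in adj.items():
        dim = len(features[joint])
        out[joint] = [
            sum(features[n][d] for n in neighbours) / len(neighbours)
            for d in range(dim)
        ]
    return out


adj = build_adjacency(JOINTS, BONES)

# Toy feature: the height (y) of each joint in metres.
heights = {"head": [1.7], "torso": [1.3], "left_hand": [0.9],
           "right_hand": [0.9], "pelvis": [1.0]}
mixed = aggregate(heights, adj)
```

After one round, each joint's feature reflects its immediate neighbours. Stacking several rounds lets information travel further along the skeleton, for example from a hand to the pelvis via the torso.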

These models learn to recognize complex spatial patterns: how weight shifts affect balance, how arm positions influence the perception of line and form, and how dancers use space to create dramatic effect. They also learn a representation of the kinesphere, the personal space around each performer, and of how movement expands or contracts within it.

Training Methodologies and Optimization

Training neural networks for choreographic understanding requires specialized loss functions that capture the nuances of movement quality. Traditional pixel-based losses fail to account for the semantic meaning of gestures, leading to technically accurate but artistically hollow results.

Advanced training approaches incorporate perceptual losses that evaluate movement quality from a human perspective. These systems learn to optimize for aesthetic criteria: smoothness of transitions, clarity of intention, and emotional resonance. Adversarial training techniques pit generation networks against discriminator networks trained to distinguish between human and AI-generated choreography.
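Of the aesthetic criteria listed above, smoothness of transitions is the easiest to write down. The sketch below penalizes discrete acceleration and is offered as one plausible formulation, not as the loss any specific system uses. The function name `smoothness_loss` and the trajectories are assumptions for the example. Clarity of intention and emotional resonance do not reduce to a formula this simple.

```python
def smoothness_loss(frames):
    """Mean squared second difference (discrete acceleration) of a sequence.

    frames: list of T frames, each a list of D coordinates, with T >= 3.
    Lower values mean smoother transitions; constant-velocity motion scores 0.
    """
    if len(frames) < 3:
        raise ValueError("need at least three frames")
    total = 0.0
    count = 0
    for prev, cur, nxt in zip(frames, frames[1:], frames[2:]):
        for p, c, n in zip(prev, cur, nxt):
            accel = n - 2.0 * c + p
            total += accel * accel
            count += 1
    return total / count


# Hypothetical one-coordinate trajectories.
glide = [[0.0], [1.0], [2.0], [3.0], [4.0]]    # constant velocity
jitter = [[0.0], [2.0], [1.0], [3.0], [2.0]]   # same general direction, jerky

glide_loss = smoothness_loss(glide)
jitter_loss = smoothness_loss(jitter)
```

The gliding trajectory incurs no penalty while the jerky one does. In training, a term like this would typically be added to the main objective with a small weight, so that it discourages jitter without flattening deliberate sharp accents.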

The Future of Artificial Creativity

As neural choreography systems continue to evolve, they promise new forms of artistic expression that blend human creativity with computational precision. These AI systems don't replace human choreographers but rather serve as collaborative partners, offering new perspectives on movement and expanding the vocabulary of dance.

The integration of intent-driven visuals and cinematic abstraction in AI-generated choreography represents a paradigm shift in how we understand the relationship between technology and art. Through silent creation processes, these systems demonstrate that artificial intelligence can capture and express the ineffable qualities that make movement truly meaningful.

The choreography of the mind continues to evolve, orchestrating motion from silent sparks of neural activity into profound expressions of artificial creativity. As we advance deeper into this frontier, the boundary between human and machine artistry becomes increasingly fluid, opening new possibilities for collaborative creation that neither could achieve alone.